1:21:28

2025-03-12 14:13

1:10:32

2025-05-27 16:05

1:56:57

2026-04-19 21:00

11:53:00

2025-01-24 09:38

3:08:44

2025-06-28 22:00

3:01:31

2025-10-02 19:15

2:21:38

2023-09-30 22:52

5:37:49

2013-01-18 02:37

11:45:59

2025-06-02 05:45

2:43:52

2025-04-20 21:25

3:19:58

2023-11-04 16:46

4:20:01

2025-10-15 21:45

1:57:17

2025-12-20 21:00

2:03:40

2023-10-21 21:37

9:11:20

2024-09-12 07:50

2:33:30

2023-09-10 20:33

2:10:28

2025-12-17 16:08

44:36

Tural Everest - Блестящая (Премьера клипа 2026), 2:44

Абдурауф Шайназаров - Давримни сурайин (Премьера клипа 2026), 3:04

AY YOLA - Aihylyu (Премьера клипа 2026), 3:49

Ярослав Леонов - Твори добро (Премьера 2026), 3:13

Альберт Эркенов - Непокорная (Премьера клипа 2026), 4:04

Zhamil Turan - Танцуй (Премьера клипа 2026), 2:59

Dzhenis - Ближе ближе (Премьера 2026), 3:17

Ислам Итляшев - Вижу ее (Премьера клипа 2026), 2:50

NITI DILA - Здесь хорошо (Премьера клипа 2026), 2:09

Шохжахон Жураев - Дилором (Премьера клипа 2026), 6:09

Рейсан Магомедкеримов - Отец (Премьера клипа 2026), 3:28

Zarina - Jojji Qaram (Official Video 2026), 3:15

Мухаммадзиё - Севишсак севишиб куёрасизми (Премьера клипа 2026), 3:44

Мужик из Сибири (Александр Конев) - Хотеть не вредно (Премьера клипа 2026), 2:31

NAIMAN - На волне (Премьера клипа 2026), 2:13

Эльдар Агачев - У дома (Премьера клипа 2026), 2:59

SEREBRO - Кто я для тебя (Премьера клипа 2026), 3:15

Zhamil Turan - Осколки (Премьера клипа 2026), 3:33

ARi SAM Vii - Я обиделась (Премьера клипа 2026), 2:45

Ярослав Леонов - Королева весны (Премьера 2026), 3:45

Анаконда | Anaconda (2025), 1:38:55

Аватар: Пламя и пепел | Avatar: Fire and Ash (2025), 3:17:12

Безымянная романтическая история о вторжении в дом | Untitled Home Invasion Romance (2025), 1:25:48

28 лет спустя: Часть II. Храм костей | 28 Years Later: The Bone Temple (2026), 1:49:24

Обитель зла: Возмездие | Resident Evil: Retribution (2012), 1:35:41

Острые козырьки: Бессмертный человек | Peaky Blinders: The Immortal Man (2026), 1:54:08

Лакомый кусок | The Rip (2025), 1:52:50

Сестра | Siseuteo (2026), 1:26:45

Зомби по имени Шон | Shaun of the Dead (2004), 1:39:31

В мгновение ока | In the Blink of an Eye (2026), 1:34:15

Майк и Ник и Ник и Элис | Mike & Nick & Nick & Alice (2026), 1:47:10

Я иду искать 2 | Ready or Not 2: Here I Come (2026), 1:47:56

Пицца фильм | Pizza Movie (2026), 1:37:12

Проект «Конец света» | Project Hail Mary (2026), 2:36:35

Прыгуны | Hoppers (2026), 1:36:21

Обитель зла 2: Апокалипсис | Resident Evil: Apocalypse (2004), 1:37:50

Zомбилэнд: Контрольный выстрел | Zombieland: Double Tap (2019), 1:39:05

Элла Маккей | Ella McCay (2025), 1:54:47

Грандиозная подделка | Il falsario (2025), 1:55:41

Обитель зла: Последняя глава | Resident Evil: The Final Chapter (2016), 1:46:38

Гордость и предубеждение | Pride & Prejudice (2005), 2:08:21

MAUR - Полетела (Премьера клипа 2025), 2:53

Bakhtin - Целовала (Премьера клипа 2023), 3:16

_*ДискотекА 80-90х ВиДео АлЬбом Лучшие.*_, 2:41:00

Давид | David (2025), 1:49:18

Барбоскины. Сезон 1. Серия 1. Пчёлка, 5:38

Сборник Новогодняя Десятка - Уральские Пельмени, 1:19:08

Зверополис 2 | Zootopia 2 (2025), 1:47:36

Зверополис | Zootopia (2016), 1:48:48

Алдан (2025), 1:38:04

Лунтик | Танцы 💃💃💃 Сборник мультиков для детей, 46:30

Ми–Ми–Мишки 💫 Звездная история 🙃 Все серии ✨ Мультики для детей, 2:10:31

Сборник Топ 20 Номеров за 2024 год - Уральские Пельмени, 2:52:30

Снова в деле (2025) Netflix, 1:54:23

Форсаж 6 | Furious 6 (2013), 2:11:07

ХИТЫ 2025 ТАНЦЕВАЛЬНАЯ МУЗЫКА СБОРНИК, 1:41:18

Кей-поп-охотницы на демонов | KPop Demon Hunters (2025), 1:39:41

Рыцарь семи королевств. Все серии, 3:28:06

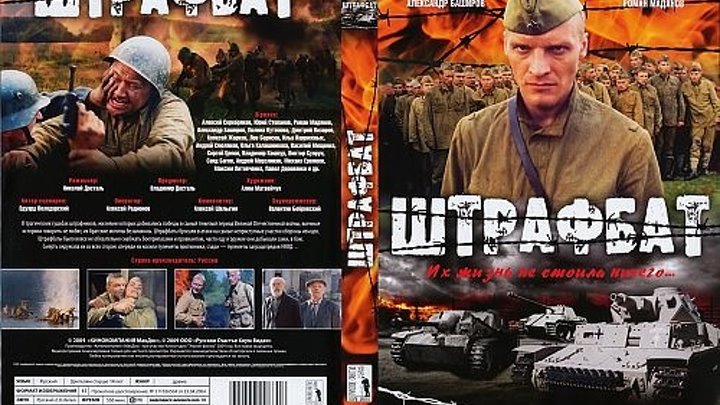

Штрафбат(1-11 серии) HD 2004, 8:05:56

Пять ночей с Фредди 2 | Five Nights at Freddy's 2 (2025), 1:44:11

44:36

Download video from RuTube

| Resolution |
| --- |
| 240x144 |
| 400x240 |
| 608x360 |
| 808x480 |
| 1208x720 |
| 1816x1080 |

2:44

2026-04-10 09:57

3:04

2026-04-08 11:38

3:49

2026-04-20 16:46

3:13

2026-04-01 15:22

4:04

2026-04-10 10:01

2:59

2026-04-15 12:45

3:17

2026-04-10 09:39

2:50

2026-04-09 09:20

2:09

2026-04-10 09:36

6:09

2026-04-20 16:56

3:28

2026-03-31 11:00

3:15

2026-04-20 17:11

3:44

2026-04-04 11:50

2:31

2026-04-08 11:47

2:13

2026-04-14 08:26

2:59

2026-03-29 16:26

3:15

2026-04-03 09:24

3:33

2026-03-26 13:34

2:45

2026-04-12 10:21

3:45

2026-04-01 14:58


1:38:55

2026-01-28 12:07

3:17:12

2026-04-02 11:34

1:25:48

2026-02-26 14:41

1:49:24

2026-02-19 14:08

1:35:41

2026-02-25 19:41

1:54:08

2026-04-13 12:20

1:52:50

2026-02-04 10:11

1:26:45

2026-03-27 13:34

1:39:31

2026-02-16 01:07

1:34:15

2026-03-01 21:54

1:47:10

2026-04-03 12:10

1:47:56

2026-04-12 17:20

1:37:12

2026-04-06 12:20

2:36:35

2026-04-11 16:06

1:36:21

2026-03-27 13:35

1:37:50

2026-02-25 19:41

1:39:05

2026-02-16 01:07

1:54:47

2026-02-11 21:47

1:55:41

2026-02-26 14:41

1:46:38

2026-02-25 19:41


2:08:21

2023-05-03 20:56

2:53

2025-04-24 09:53

3:16

2023-10-13 14:26

2024-03-18 17:25

1:49:18

2026-01-29 11:25

5:38

2023-12-25 15:26

2026-01-01 13:59

1:47:36

2025-12-25 17:49

1:48:48

2024-12-16 19:01

1:38:04

2026-03-26 23:45

2024-08-05 22:22

2024-01-17 17:34

2025-01-13 14:00

1:54:23

2025-01-18 20:05

2:11:07

2023-04-25 22:52

2024-06-25 00:21

1:39:41

2025-10-29 16:30

3:28:06

2026-02-24 11:12

8:05:56

2017-07-08 19:33

1:44:11

2025-12-25 22:29
